If you're searching for the right Instagram video filter, you're usually solving one of two problems: you need a fast, repeatable way to make Reels and Stories look better without burning hours in editing, or you need a branded effect that creators and customers can use and share. Both matter — and both have specific workflows that this guide walks through end to end.

A filter isn't decoration. It's a decision about consistency, speed, and how recognizable your content feels in someone's feed. Whether you're tapping a built-in Reel effect before hitting record or building a custom AR experience for a campaign, the rules below will help you ship something that actually performs.

Quick Answer: How to Apply a Filter to an Instagram Video

To add a filter to an Instagram video:

- Open the Instagram camera (tap the + or swipe right from the feed).

- Swipe right along the bottom to browse effects, or tap Browse Effects to open the full gallery.

- Tap a filter to preview it live on camera.

- Record your clip or tap the gallery icon to upload an existing video — the filter applies either way.

- Tap the smiley-face Save Effect icon to keep the filter in your tray for next time.

For a custom branded filter, build it in Meta Spark AR Studio, submit through the Spark AR Hub, and the effect appears on your Instagram account once approved (typically 5–10 business days).

Filter vs Effect vs AR vs Preset — What's the Difference?

These terms get used interchangeably, which causes a lot of confusion. Here's the clean version:

| Term | What it means | When to use it |

|---|---|---|

| Filter | A color and tone preset (warmth, contrast, saturation) applied to your video | When you want a consistent visual look across posts |

| Effect | Instagram's catch-all term for everything in the Effects Gallery — filters, AR overlays, animations, and interactive triggers | What Instagram's UI actually calls them |

| AR effect | A 3D or interactive overlay that tracks your face, hands, or surroundings (e.g., glasses, frames, particles) | When you want participation or a branded experience |

| Preset | A saved combination of edits applied outside Instagram (Lightroom, VN, CapCut) | When you need exact color control before uploading |

All filters are effects, but not all effects are filters. AR effects are the participation layer; presets live outside Instagram entirely.

Manage All Your Social Accounts Without the Chaos

Schedule posts, track performance, and collaborate with your team.

The Two Worlds of Instagram Video Filters

A client sends over two briefs on the same day. One needs five Reels edited and approved before 3 p.m. The other needs a branded effect for a product launch next month. Both requests sit under the label "Instagram video filter," but they belong to different production tracks, different budgets, and different success metrics.

The first track uses Instagram's built-in video filters and effects to adjust the look of content quickly inside the app. The job is speed and consistency. Social teams use these tools to clean up lighting, steady the visual mood across a content batch, and keep day-to-day publishing from drifting off-brand.

The second track is custom AR. That includes face filters, interactive prompts, branded overlays, object placement, and camera effects people can record themselves with. The job is participation. Instead of styling one post, you create a reusable experience that can spread through creators, customers, or event attendees.

Instagram's filter culture has deep roots. Early filters helped define the app's visual identity, and according to a summary of Instagram's filter history from Georgia Tech, those aesthetic choices shaped how users learned to edit for the platform. AR changed the role of filters again. Meta describes its camera effects ecosystem through Spark AR case studies and platform materials, which show how brands and creators use effects for interactive campaigns rather than simple color treatment.

That distinction matters for client planning.

| Filter type | Best use | Main benefit | Common mistake |

|---|---|---|---|

| Built-in aesthetic filter | Reels, Stories, product clips, talking-head videos | Faster production and a more consistent look | Choosing a trendy preset that fights the footage or skin tones |

| Custom AR effect | Campaigns, launches, events, creator collaborations | Branded participation and repeat use by the audience | Approving a concept that looks clever in review but feels awkward to use on camera |

The trade-off is straightforward. Built-in filters are operational tools. They help a team publish more efficiently, especially when approval cycles are tight and the content only needs visual polish. Custom AR effects are campaign assets. They take concepting, design, testing, publishing, and promotion, but they can extend reach because the audience becomes part of the content.

Use the brief to decide. If the goal is to make this week's content look cleaner and more recognizable, stay inside the native camera workflow. If the goal is to get creators or customers to record themselves with your brand built into the experience, AR is the better choice.

Trend tracking helps, but trend chasing wastes time. Agency teams need to know which looks are gaining traction in Reels, which ones are already saturated, and which ones can be adapted without losing brand consistency. A useful reference point is AI-driven Instagram Reel trend analysis, especially for deciding whether a filter style belongs in ongoing content or a short campaign burst.

Placement matters too. Reels and Stories reward different creative decisions, so the same filter setup will not perform the same way in both. If your team is setting client standards, review the differences between Instagram Reels and Stories before you standardize filter choices.

How to Apply an Instagram Video Filter (Step by Step)

The full applied workflow, with the small details that matter:

Apply a filter before recording

- Open the Instagram camera.

- Swipe right along the bottom effects tray to scroll through filters and AR effects. Each one previews live.

- Tap a filter to lock it in.

- Hold the capture button to record (Reels can record up to the current Reels length cap; Stories cap at 60 seconds per clip).

- Review the preview. If it looks off, tap the X on the filter name to clear it and pick another.

Apply before recording when you want the talent or subject to see the look while performing. Face-tracking effects, beauty filters, and interactive prompts behave differently in front of someone — confidence on camera goes up when the look is visible during the take.

Apply a filter to an existing video

- Open the Instagram camera and tap the gallery icon in the bottom-left.

- Select your clip from the camera roll.

- Swipe through the effects tray to apply a filter on top of your imported footage.

- Tap Next to continue to captions, music, and tagging.

Apply after recording when you want editing flexibility, when you might cross-post to TikTok or Facebook, or when client approvals are still moving. Keeping source footage clean leaves you more options later.

Apply a filter from someone else's Reel or Story

- Tap the effect name shown at the top of any Reel or Story using a filter.

- From the effect detail screen, tap Try it to open the camera with the filter applied, or Save Effect to add it to your tray without using it immediately.

This is how most social managers build a working library. If a video looks great on Tuesday, save the effect on Tuesday — don't try to remember the name on Thursday.

Pro tip: A filter should fix a visual problem or reinforce a brand cue. If it's only there because it looked good on someone else's Reel, it's probably the wrong choice for yours.

How to Save and Reuse Instagram Video Filters

Saved filters live at the front of your effects tray for fast reuse. To save one:

- From the camera: while the filter is active, tap the smiley-face Save Effect icon under the filter name.

- From a Reel or Story: tap the effect name → Save Effect.

- From a creator's profile: open the Effects tab on the profile of a creator who builds AR (look for the smiley-face icon) → tap any effect → Save.

To unsave: open the camera → tap the saved filter → tap the bookmark/save icon again to remove it from your tray.

Build your saved-filter library by content type, not by trend. A few filters for talking-head videos, a few for product shots, a few for mood-driven clips. If you save everything, you'll use nothing consistently.

Best Instagram Video Filters by Content Type

The best built-in Instagram video filter is usually the one no one notices right away. The video just looks cleaner. Product edges are clearer. Skin looks normal. Whites don't turn blue. Food doesn't look gray. That's the standard.

| Content type | Filter family that usually works | Why |

|---|---|---|

| Talking-head Reels | Subtle warm or neutral skin-tone presets | Keeps skin natural, avoids the "blue face" look under indoor light |

| Food and drink | Warm-tone filters that boost saturation slightly | Makes food look fresh without going neon |

| Product demos | Minimal correction with brightness/contrast tweaks | Product color must stay accurate |

| Lifestyle / travel | Higher-contrast film looks | Adds mood without distorting faces |

| Beauty content | Light beauty filters that smooth without erasing texture | Maintains believability — the audience can spot a heavy filter |

| Behind-the-scenes | Native "Normal" or grainy film looks | Feels candid, not over-produced |

| Fitness / athletics | Cooler tones with crisp contrast | Emphasizes definition and movement |

| Founder / B2B | Almost no filter — warmth nudge only | Trust signals matter more than aesthetics |

Use this decision framework when picking the look:

- Service businesses: keep filters subtle. Consultants, coaches, and SaaS brands usually perform better with clean, natural video than heavily stylized effects.

- Fashion, beauty, lifestyle: stronger tonal choices can work, but they still need to preserve texture and true product color.

- Local businesses: prioritize clarity over mood. If you're showing a space, menu item, or staff member, viewers need to trust what they're seeing.

- Creator-led brands: choose a repeatable look that can survive high posting volume. A filter that's hard to match manually becomes a maintenance problem.

Captions matter here too. A polished filter won't save a Reel if people watch on mute and can't follow the point. If you're pairing visual consistency with discoverability, this guide on improving video SEO with accurate subtitling is a practical companion to your filter workflow.

Common mistakes with native filters

| Mistake | Why it hurts | Better move |

|---|---|---|

| Using a different look in every Reel | The brand feels inconsistent | Pick a small set of approved looks |

| Over-brightening faces | Skin loses detail and feels artificial | Keep exposure adjustments modest |

| Using heavy beauty effects on product videos | The product stops looking real | Reserve beauty-style effects for face-led content |

| Copying a trend filter exactly | The content feels borrowed | Adapt the tone, not the whole aesthetic |

How to Create a Custom Instagram AR Filter

A client approves a filter concept on Monday. By Friday, the team is still stuck in revisions because the effect looked good in a desktop preview, but felt clumsy on a phone, loaded too slowly, and raised brand questions no one caught early. That is the usual failure pattern with custom AR. The build is only one part of the job. The essential work involves choosing the right scope, building for mobile behavior, and testing the effect like a campaign asset.

For agency work, the best first version is usually small and deliberate. A branded face effect, a campaign overlay, or a light reactive animation is enough to prove the concept, get internal approval faster, and give the client something they can publish without a long explanation.

Start with a lightweight concept

The strongest first builds have one job.

Use that constraint to shape the brief:

- Brand presence: add a logo, frame, product icon, or campaign marker.

- Participation: give users something to wear, trigger, or interact with on camera.

- Utility: make the video more shareable with a subtle visual enhancement.

If a strategist, designer, and client services lead cannot explain the effect in one sentence, the concept needs to be simplified before production starts.

What to do inside Spark AR Studio

Spark AR Studio is still the practical starting point for custom Instagram AR workflows. The interface looks heavy the first time you open it, but early builds only depend on a few areas:

- Viewport: preview the effect

- Scene panel: organize objects

- Assets panel: store imported graphics, audio, and materials

- Patch Editor: connect interactive behavior without writing much code

Meta's Spark AR documentation explains the platform setup, including face tracking and object placement limits, in its official Spark AR learning resources.

A simple first build usually follows this sequence:

- Install Spark AR Studio and choose a starting template. Use a face-tracker template if the effect needs to follow the user's face. That keeps placement stable and reduces setup time.

- Add a face tracker. This gives the effect a reliable anchor. Glasses, labels, stickers, and small branded objects all work better when tied to facial movement instead of fixed screen position.

- Import a 2D asset. Start with a transparent PNG, simple frame, or small icon. Version one is about clarity, not complexity.

- Add basic logic. Use the Patch Editor for motion, expression triggers, or visibility changes. It works like a visual logic builder, which is enough for many branded effects.

The pitfalls that cause avoidable problems

Bad AR filters usually fail because of production discipline, not creativity. The same issues show up in client builds over and over. Assets are too heavy, interaction is unclear, or the effect depends on media behavior that doesn't hold up on mobile networks.

The common failure points are straightforward:

| Pitfall | Result | Better approach |

|---|---|---|

| Large exported file | Slow load time and weak completion rates | Compress textures, remove unused assets, simplify animation |

| Unsupported media setup | Review delays or playback problems | Use asset types and behaviors supported by the platform |

| Screen-fixed elements that should track the face | The effect feels detached or broken | Attach objects to a face tracker or plane tracker |

| Overbuilt version-one concepts | Long revisions and approval friction | Launch a simpler effect first, then expand only if people use it |

External media dependencies deserve extra caution. Meta's creator guidance and community troubleshooting across Spark AR workflows have repeatedly pointed teams toward local, supported assets rather than relying on externally hosted media that can fail under network conditions or review constraints. In agency terms, if the effect depends on something unstable, it's not production-ready.

Performance also affects campaign results. Lenslist's guidance for AR creators on optimizing effect size and mobile performance is a useful reference for keeping assets lighter and more reliable across devices. That's where a lot of client projects win or lose. A filter that looks polished but opens slowly will underperform a simpler one that loads fast and feels responsive.

Practical check: if the effect takes too long to load on average mobile data, cut complexity before you cut media budget.
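One way to catch heavy assets before they reach review is a quick size audit of the project folder. The sketch below is a minimal example in Python, not part of any Meta tooling: the `find_heavy_assets` helper and the 1 MB default budget are assumptions chosen for illustration, so swap in whatever limit your publishing target currently enforces.

```python
from pathlib import Path

# Hypothetical budget for illustration only; replace it with the limit
# your publishing target actually enforces.
ASSET_BUDGET_BYTES = 1_000_000


def find_heavy_assets(folder, budget_bytes=ASSET_BUDGET_BYTES):
    """Return (path, size_in_bytes) for every file above the budget, largest first."""
    heavy = [
        (path, path.stat().st_size)
        for path in Path(folder).rglob("*")
        if path.is_file() and path.stat().st_size > budget_bytes
    ]
    return sorted(heavy, key=lambda item: item[1], reverse=True)
```

Point it at the folder that holds your textures and audio, then compress or remove whatever appears at the top of the list first.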

A good first filter for agencies

For client work, the safest first custom filter usually fits into one of four patterns:

- A subtle branded frame for Stories during an event

- A logo or campaign mark placed near the forehead or cheek

- A small animated icon triggered by a smile, blink, or open mouth

- A seasonal overlay that changes mood without hiding the face

These builds are easier to review internally because they stay close to the campaign message. They also reduce legal and brand risk. Extreme facial distortion, unclear claims, or gimmicky interactions create more approval friction than value for many brands.

The trade-off is creative ambition. A lightweight effect won't feel as novel as a game-like AR experience. But it will ship faster, get approved more easily, and give the team performance data it can use before investing in a larger build.

Testing before submission

Always test on a real phone, preferably more than one. Desktop preview is only a draft environment. It doesn't tell you enough about front-camera framing, touch response, heat, or how the effect feels in a user's hand.

Run this review pass before submission:

- Check face alignment in bright light, low light, and mixed indoor light.

- Test movement while talking, smiling, and turning the head.

- Confirm text legibility on smaller screens.

- Check load time on weaker mobile data if possible.

- Watch device performance on older phones, including battery and heat.

- Review brand details such as logo clarity, color fidelity, and any campaign wording.

This testing step matters even more in an agency workflow because filter production rarely sits alone. The same team may be building the effect, drafting launch Reels, writing captions, and preparing paid support at the same time. If your team uses AI for concepting or campaign prep, this roundup of AI tools for social media marketing is useful for planning around the filter while keeping the AR build itself under manual QA.

The goal is not just to create a filter. It's to produce an effect the client can approve, publish, reuse, and measure without turning a creative experiment into an operations problem.

Publishing and Promoting Your Custom Filter

Creating the filter is the easy part for many teams. The harder part is getting it approved, distributing it cleanly, and making sure people use it after launch.

That work starts with review readiness.

Get the file ready for review

Before uploading to the publishing hub, review the effect like a platform reviewer would.

Check these areas:

- Brand use: logos, product references, and campaign claims need to be accurate and permitted.

- Performance: if the filter feels heavy or unstable on mobile, fix that before submission.

- Visual clarity: the user should understand the effect immediately.

- Policy fit: avoid misleading facial changes, restricted content cues, or anything that could trigger moderation concerns.

A lot of rejections happen because brands treat the review process like a technical upload, when it's really a content review plus a performance review.

What to do once it's approved

After approval, package the launch assets right away so the filter doesn't just sit on the profile.

Your practical distribution kit should include:

- A demo Reel: show someone using the effect in a normal, human way. Don't make the launch video feel like software documentation.

- A short Story sequence: show where to find the filter and what it's for.

- A shareable link or access path: make it easy for creators, internal team members, and partners to test.

- A creator brief: if influencers or staff are using it, give them a one-page instruction set with examples of what fits the campaign.

If the first launch content doesn't demonstrate the filter clearly, most users won't take the extra step to try it.

Promotion ideas that don't feel forced

You don't need a huge campaign to get traction. You need repeated visibility in the places your audience already pays attention to.

A strong rollout often includes:

- Employee or creator seeding: ask a small set of people to post with it first.

- Event tie-ins: launch the filter around a product drop, store opening, webinar, or seasonal push.

- UGC prompts: give people a reason to use it, such as reactions, before-and-after clips, or themed participation.

- Audio pairing: if the Reel promoting the filter uses the right sound, adoption usually feels more native.

If you need help lining up that last piece, this guide to trending audio on Instagram is useful when you're packaging the launch creative.

Reasons custom filters underperform after approval

| Problem | What it looks like | Fix |

|---|---|---|

| Weak launch asset | People see the announcement but don't understand the effect | Publish a clearer demo |

| No creator distribution | Only the brand account uses it | Seed it through staff, creators, and ambassadors |

| Too much friction | Users can't find or recognize it easily | Simplify naming and access |

| Effect is too niche | It only fits one exact scenario | Broaden the use case slightly |

Why Isn't My Instagram Video Filter Working?

If a filter isn't applying, isn't saving, or isn't tracking your face correctly, the cause is usually one of a small set of issues. Match your symptom to the fix:

| Symptom | Likely cause | Fix |

|---|---|---|

| Filter button missing entirely | Outdated app or region rollout | Update Instagram in the App Store / Play Store; restart the app |

| Saved filter has disappeared from your tray | Creator unpublished the effect from Spark AR Hub | Saved filters require the original effect to remain published — find an alternative |

| Filter doesn't track your face | Poor lighting or face partially out of frame | Move to brighter, even lighting; keep your full face in frame |

| Filter looks different on Reels vs Stories | Different aspect ratios change the crop | Test the filter in both formats before committing to one |

| Filter is laggy or causes the camera to freeze | Older device or low memory | Close background apps, restart the phone, try on a newer device |

| Custom AR filter rejected at submission | Asset size, brand misuse, or unsupported media | See Meta's Spark AR Hub review feedback for the specific reason |

| Filter applies but won't export with your video | Effect uses unsupported export behavior | Re-record with the filter active rather than applying after export |

| You can't find a specific filter you saw | Effect was unpublished or is region-locked | Open the original Reel/Story → tap the effect name → Save while it's still live |

If your filtered video is scheduled but never publishes, that's a different problem. See our complete guide on Instagram scheduled posts not working for the full troubleshooting checklist.

Developing a Brand-Consistent Filter Strategy

A client signs off on three Reels in the same week. One uses a warm lifestyle filter, one is flat and clinical, and one adds a playful face effect that fits neither the audience nor the offer. Nothing is technically wrong with any single post. Together, they make the brand feel inconsistent.

That inconsistency shows up in memory before it shows up in reporting. People do not usually comment on filter strategy. They just fail to build a clear impression of the brand.

The broader branding research is much stronger than one-off social anecdotes. Lucidpress reported in its oft-cited brand consistency research that consistent brand presentation can increase revenue by up to 33%, which is why visual treatment deserves the same rules as copy, templates, and posting cadence. Filters sit inside that system. They are not decoration.

For agencies, this is usually an operations problem before it becomes a creative problem. Clients approve posts one by one, creators edit in different apps, and nobody defines what the brand should look like across education content, product demos, founder clips, and campaign creative. The result is drift.

Build a filter family, not a single default

A usable strategy starts with a small set of approved looks tied to specific jobs.

- Core brand filter: the everyday baseline for Reels, recurring series, team updates, and talking-head content.

- Product-preservation filter: a lighter treatment that protects color accuracy, texture, and packaging details. This matters for beauty, food, fashion, and any client with compliance concerns.

- Campaign filter: a temporary variation for launches, events, or seasonal pushes. It should still feel related to the main brand system.

- Interactive filter: a custom AR effect for participation-driven moments. Use it selectively, with a clear campaign role.

This structure keeps teams from overusing one preset until every post looks stale. It also speeds up approvals because each filter already has a purpose.

Keep the visual logic consistent across platforms

The same exact treatment rarely works everywhere. Instagram can carry more polish. TikTok usually performs better when the edit feels closer to native creator content. LinkedIn needs restraint.

What should stay consistent is the visual logic: warmth level, contrast range, skin tone handling, saturation, and how aggressive the effect feels.

| Platform | Filter approach | Why |

|---|---|---|

| Instagram Reels | Polished, brand-forward | Audiences accept stronger visual finishing |

| TikTok | Lighter treatment, still on-brand | Overprocessed edits can feel imported |

| LinkedIn | Minimal correction | Clarity and credibility matter more than stylization |

| Facebook | Similar to Instagram, slightly softer | Trend tolerance is lower for many brand audiences |

| X | Clean and readable | Speed and legibility matter most |

Teams that already document format, cadence, and creative themes should place filter rules beside the broader Instagram content strategy framework. That keeps visual choices tied to content goals instead of personal taste.

Create a one-page filter standard

A full brand book is not required. A single working document is usually enough if the team uses it.

Include:

- approved filters by content type

- sample before-and-after frames

- brightness and white balance limits

- rules for skin tones and product color accuracy

- examples of effects that are off-brand

- platform-specific notes for Instagram, TikTok, LinkedIn, Facebook, and X

Add one line for escalation: who decides when footage can break the standard. That saves time during fast-moving launches.
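If the team prefers a machine-readable version of that one-page standard, it can live as a small data structure next to the content calendar. The sketch below is one possible shape in Python. Every key, filter name, and role in it is a hypothetical placeholder, not a recommended set.

```python
# All names and values below are hypothetical placeholders for one client.
FILTER_STANDARD = {
    "approved_filters": {
        "talking_head": ["warm-neutral"],
        "product_demo": ["minimal-correction"],
        "campaign": ["launch-look"],
    },
    "off_brand_examples": ["heavy beauty smoothing", "extreme face distortion"],
    "escalation_owner": "creative lead",
}


def check_filter(content_type, filter_name, standard=FILTER_STANDARD):
    """Return 'approved', or an escalation message naming who decides exceptions."""
    approved = standard["approved_filters"].get(content_type, [])
    if filter_name in approved:
        return "approved"
    return f"escalate to {standard['escalation_owner']}"
```

Keeping the escalation owner in the same structure means an unapproved look always comes back with a name attached rather than a silent rejection.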

Break the rule on purpose

Good strategy includes exceptions.

Use a different treatment when the source footage is poor and needs correction first, when a trend demands a native in-app look, when product color needs to stay exact, or when a short campaign needs its own visual identity. The key is documenting the exception so it does not quietly become the new default.

This matters even more in agency environments where several editors and account managers touch the same campaign. Strong campaign planning helps, but it only works if visual standards are decided before production starts. For teams building that process, this guide to planning social media campaigns with Mifu is a useful companion.

How to Measure Your Filter's Performance and ROI

A client reviews the monthly report, sees strong Reel reach, and asks a fair question: did the filter help, or did the post work for other reasons? If your team cannot answer that, filter strategy stays in the "creative preference" bucket instead of becoming a repeatable performance tool.

Measure filters at two levels. First, test whether a visual treatment improves the performance of comparable videos. Second, if you built a custom AR effect, track whether people adopted it and whether that usage supported the campaign objective. That distinction matters because a built-in filter and a branded AR effect solve different problems.

Interest in measurement has grown, and you can verify that directly in Google Trends data for Instagram filter analytics searches. On the platform side, Meta has also expanded what brands can analyze through its professional reporting tools and account insights, as outlined in Meta's business help and insights documentation. Together, those sources support the case for measuring filters without resting it on a single Reel or an unsourced roundup.

What to measure for built-in filters

For native Instagram video filters, keep the test narrow. Compare filtered and non-filtered videos within the same format, audience, and content goal. A founder talking to camera should be compared against similar founder videos, not against product demos or meme edits.

Track these metrics:

- Completion rate: useful for judging whether the treatment improves watchability or creates friction.

- Shares and saves: strong for Reels where the look may make the content feel more reference-worthy or more worth passing along.

- Comment quality: read the comments, don't just count them. Reactions such as "love the color" can be positive, but repeated comments about unnatural skin tones usually signal a creative problem.

- Cross-platform carryover: if the same asset runs on TikTok, Facebook, LinkedIn, or X, log where the treatment still works and where it starts to feel too platform-native to travel well.

A monthly sample is usually enough to spot patterns. One post is noise.

For the actual comparison, hold topic, format, posting window, and CTA as steady as possible, then change the treatment. That doesn't create lab-grade attribution, but it gives clients a clean read on whether the filter choice is helping or hurting.
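A spreadsheet handles this comparison fine, but the logic is simple enough to sketch in a few lines of Python. The example below assumes you have already collected one row per Reel by hand or from your own reporting export. The field names (`series`, `treatment`, `completion_rate`) and the sample values are made up for illustration and do not come from any Instagram API.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical rows: one per Reel, with illustrative values only.
posts = [
    {"series": "founder", "treatment": "warm", "completion_rate": 0.40},
    {"series": "founder", "treatment": "warm", "completion_rate": 0.50},
    {"series": "founder", "treatment": "none", "completion_rate": 0.30},
    {"series": "founder", "treatment": "none", "completion_rate": 0.40},
    {"series": "product-demo", "treatment": "warm", "completion_rate": 0.20},
]


def mean_completion_by_treatment(rows, series):
    """Average completion rate per treatment, restricted to one comparable series."""
    grouped = defaultdict(list)
    for row in rows:
        if row["series"] == series:
            grouped[row["treatment"]].append(row["completion_rate"])
    return {treatment: mean(rates) for treatment, rates in grouped.items()}


print(mean_completion_by_treatment(posts, "founder"))
```

Filtering by series first is the point of the design: the product demo row never enters the founder comparison, which keeps the read as clean as a small sample allows.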

What to measure for custom AR effects

Custom AR effects need a broader scorecard because the asset itself can generate reach, user content, and brand lift. Instagram's effect insights have changed over time, so always confirm what is currently available in Meta's official reporting before promising a client a specific metric set.

This framework works well:

| Layer | Question | Example interpretation |

|---|---|---|

| Exposure | Did people encounter the effect or content using it? | Useful for awareness and launch campaigns |

| Opens or tries | Did people activate the effect after seeing it? | Good signal that the concept is interesting enough to test |

| Usage | Did people record with it? | Shows the effect is usable, not just noticed |

| Shares | Did those recordings turn into distributed content? | Indicates participation and social spread |

| Business outcome | Did the campaign metric move? | Best read for launches, events, branded UGC, or creator activations |

That final row is where weak reporting usually breaks down. If the campaign goal was trial, sign-up, waitlist growth, store visits, or UGC volume, say that plainly and connect the filter to that outcome. If there is no business tie-in, call the effect what it is: an awareness asset.
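The first four layers of that scorecard form a funnel, so the step-to-step conversion between them is often more telling than the raw counts. Here is a minimal Python sketch of that arithmetic. The `funnel_rates` helper and the sample counts are hypothetical, and the layers you can actually fill in depend on what Meta's reporting currently exposes.

```python
def funnel_rates(counts):
    """Step-to-step conversion for an ordered list of (layer_name, count) pairs.

    A step whose starting count is zero gets None instead of a rate.
    """
    rates = {}
    for (prev_name, prev_count), (name, count) in zip(counts, counts[1:]):
        rates[f"{prev_name} -> {name}"] = count / prev_count if prev_count else None
    return rates


# Hypothetical counts for one launch week.
launch_week = [
    ("exposure", 10_000),
    ("opens", 1_000),
    ("usage", 250),
    ("shares", 50),
]

for step, rate in funnel_rates(launch_week).items():
    print(step, rate)
```

A sharp drop between opens and usage usually points at the effect itself, while a drop between usage and shares points at the content people are making with it.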

If your team needs a cleaner planning model before reporting, planning social media campaigns with Mifu is useful because it forces the creative concept, distribution plan, and success metric into the same workflow.

Reporting filter ROI to clients

Client reports do not need a theory lesson on aesthetics. They need a decision.

A format that holds up well is:

- State the treatment used. Example: warm correction on talking-head Reels, product-accurate grading for demos, branded AR effect during launch week.

- Show the baseline: use prior comparable posts, a matched control set, or pre-filter performance from the same content series.

- Highlight one business-relevant result: completion rate, save rate, UGC volume, qualified engagement, or campaign participation.

- Make the next recommendation: keep it, refine it, limit it to one format, or retire it.

That last step is what turns measurement into workflow. A filter should end each month with a status, not with a vague note that the team "liked the look."

For teams that need better native measurement before layering in filter analysis, this guide to Instagram business account analytics gives the reporting foundation.

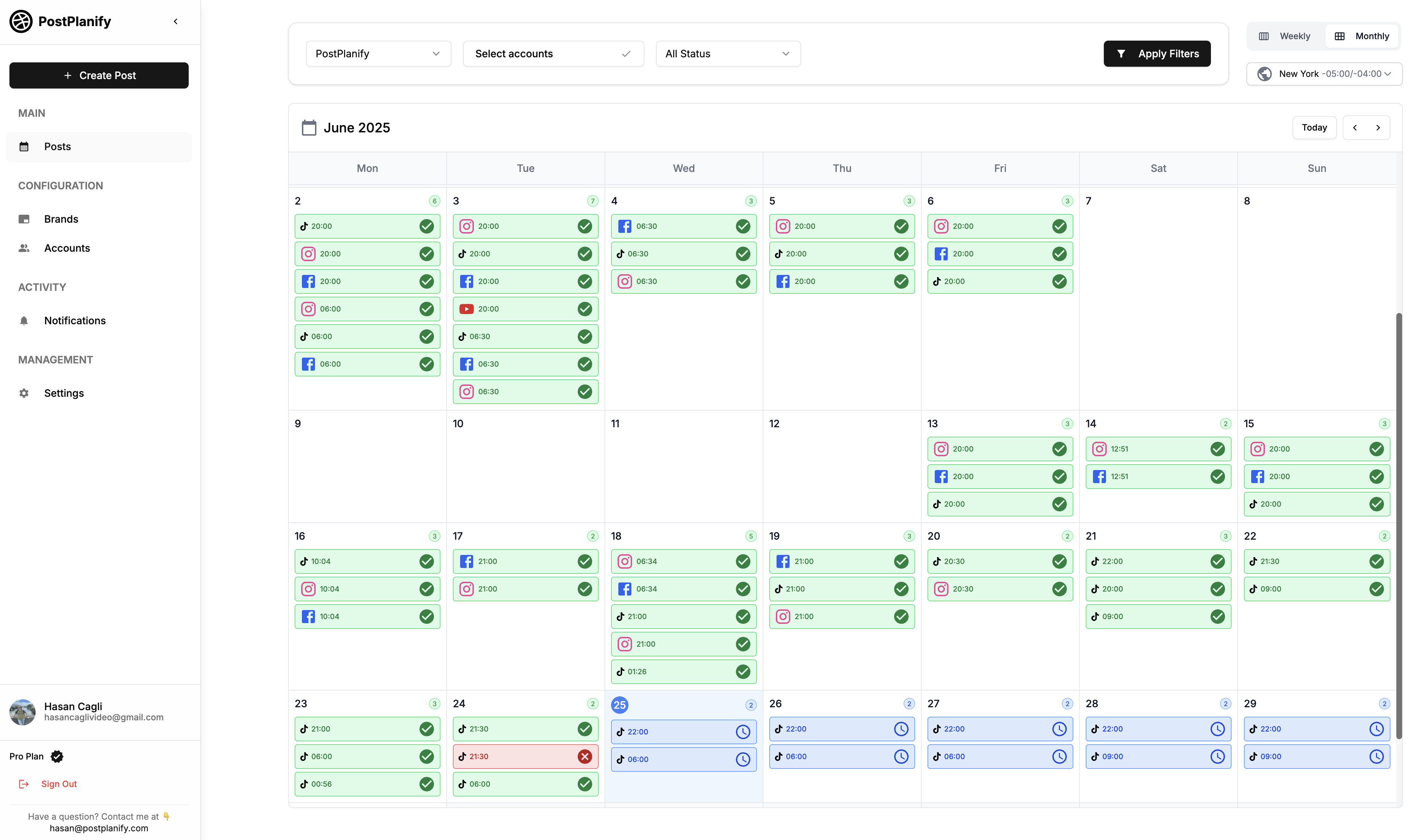

Integrating Instagram Video Filters into Your Workflow with PostPlanify

Most filter strategies fail for operational reasons. The creative decision gets made, but nobody documents it. Editors export different looks. Account managers approve videos in isolation. Clients ask for changes after the Reel is already built. Then the reporting team can't explain what visual treatment was used on which post.

That's why the best filter workflow is boring in the right places. It standardizes naming, storage, approvals, and reporting.

The recommendation side matters too. Instagram uses a multi-stage ranking model for recommendations, and creators who consistently apply a defined filter aesthetic frequently report meaningful engagement gains, summarized in this Reels ranking analysis. Whether every team sees the same outcome will vary, but the strategic takeaway is solid: a consistent visual style helps the platform understand who the content is for.

A practical agency process

Here's the workflow that tends to hold up across multiple client accounts:

- Define approved filter families per client. Give each client a short list. Everyday Reel look, product-safe look, campaign look, and any custom AR effect.

- Tag assets at upload. When a video enters your shared system, label the filter treatment in the file name or notes. If it's raw footage intended for native filtering later, note that too.

- Add filter instructions to the content calendar. Don't assume the publisher remembers. Put the intended Instagram video filter directly in the post notes or checklist.

- Use approval steps that include the visual treatment. Clients often approve caption and thumbnail, then react late to the color treatment. Make the filter part of approval, not an afterthought.

- Report by content pattern, not post-by-post chaos. Group Reels by filter family so your team can tell whether a visual system is working.
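The tagging and reporting steps above can be connected with very little tooling. The sketch below assumes a hypothetical file naming convention, `<client>_<date>_<filter-family>_<slug>.<ext>`, and a metric you already export per post; neither the convention nor the function names come from Instagram or PostPlanify.

```python
from collections import defaultdict
from pathlib import Path
from statistics import mean


def filter_family(file_name: str) -> str:
    """Read the filter family from '<client>_<date>_<family>_<slug>.<ext>'.

    The naming convention is an assumption made for this example.
    """
    parts = Path(file_name).stem.split("_", 3)
    if len(parts) < 4:
        raise ValueError(f"unexpected file name: {file_name}")
    return parts[2]


def average_by_family(posts: list[tuple[str, float]]) -> dict[str, float]:
    """Group (file_name, metric) pairs by filter family and average the metric."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for file_name, metric in posts:
        grouped[filter_family(file_name)].append(metric)
    return {family: mean(values) for family, values in grouped.items()}


# Illustrative completion rates, not real data.
posts = [
    ("acme_20250301_everyday_founder-intro.mp4", 0.5),
    ("acme_20250305_everyday_faq.mp4", 0.25),
    ("acme_20250310_product-safe_demo.mp4", 0.75),
]
print(average_by_family(posts))
# {'everyday': 0.375, 'product-safe': 0.75}
```

Putting the family in the file name means the label survives even when a video moves between tools. If your team prefers notes or a spreadsheet column, only `filter_family` needs to change.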

Where scheduling helps

Scheduling matters because it turns a visual preference into a repeatable publishing habit.

If you know a client's talking-head Reels should use one consistent aesthetic, scheduling those pieces in batches reduces visual drift. The same applies to launch campaigns with custom effects. You can line up the demo Reel, reminder Story assets, UGC prompts, and follow-up posts instead of improvising every publish day.

A planning system also helps with edge cases:

- Native-only effects: some filters must be applied in-app, so the calendar needs notes for manual finishing steps.

- Account permissions: creators, client stakeholders, and agency staff may not all have access to the same profile tools.

- Cross-platform reuse: filtered Instagram exports may not be the right master asset for TikTok or LinkedIn.

- Review delays: if a custom effect is still pending approval, the launch schedule needs a fallback creative path.

Why teams pick PostPlanify for filter-driven content

If you're managing filtered Reels and Stories across multiple accounts, PostPlanify keeps the production, approval, and reporting cycle in one place — so your filter standard actually gets enforced instead of drifting between editors.

What you get:

- Multi-platform scheduling across Instagram, TikTok, X, Facebook, LinkedIn, YouTube, Threads, Pinterest, Bluesky, and Google Business

- Vision-powered AI assistant that drafts captions and hashtags directly from the visuals you upload — handy when filtered Reels need consistent voice across a batch

- Analytics across every platform with best-time-to-post recommendations, so you can compare filtered vs unfiltered Reel performance side by side

- Social inbox to manage comments, DMs, and mentions in one place after a filter-led campaign drives engagement

- Approval workflows and team collaboration with multi-step client and team review — the filter treatment becomes part of the approval, not a surprise

- White-label PDF reports to show clients which filter family ran on which content, with the performance to match

- Bulk scheduling and a content calendar that keep filtered Reels visually consistent over weeks of output

- Media library to store approved filter references, before/after frames, and brand assets in one shared spot

- Link in bio page to send Reel viewers to a branded landing experience that matches the filter aesthetic

Pricing starts at $79/mo billed yearly (or $99/mo monthly) on the Growth plan, which includes 15 social accounts and 3 team members.

Manage All Your Social Accounts Without the Chaos

Schedule posts, track performance, and collaborate with your team.

Instagram Video Filter FAQ

What is an Instagram video filter?

An Instagram video filter is a visual treatment applied to a video — either a color/tone preset (warmth, contrast, saturation) or an AR overlay (face tracking, animations, branded elements). Filters live inside the Instagram camera's Effects Gallery and can be applied before recording, after recording, or to videos uploaded from your camera roll.

How do I add a filter to a video I already recorded?

Open the Instagram camera, tap the gallery icon in the bottom-left, select your video, then swipe right through the effects tray to apply a filter. You can also upload directly to Reels or Stories and apply the filter from the editor.

How do I save an Instagram filter for later?

While the filter is active in the camera, tap the smiley-face Save Effect icon below the filter name. Saved filters appear at the front of your effects tray, ready to reuse on future videos. To unsave, tap the icon again.

Why can't I find a specific filter I saw in someone else's Reel?

The creator may have unpublished the effect, or the filter may not be available in your region. Try opening the original video where you saw the filter and tapping the effect name at the top — if it's still live, you'll be able to use or save it from there. If the effect was removed by its creator, it's gone for good.

What's the difference between a filter and an effect on Instagram?

Filters are a type of effect. "Effect" is Instagram's catch-all term for anything in the Effects Gallery, including color filters, AR overlays, animations, and interactive triggers. All filters are effects, but not all effects are filters.

Can I create my own Instagram video filter for free?

Yes. Meta Spark AR Studio is free to download and use. You can build a custom AR effect, submit it for review through the Spark AR Hub, and once approved it appears on your Instagram account. The build itself is free; the cost is design, testing, and submission time.

Why isn't my Instagram video filter working?

The most common reasons: an outdated Instagram app, the filter being unpublished by its creator, poor lighting that breaks face tracking, the effect being region-locked, or device performance issues on older phones. Update Instagram, restart the app, and test in better lighting. See the troubleshooting table above for symptom-specific fixes.

Can I use the same filter on Reels and Stories?

Yes. Most filters work in both formats. Reels and Stories both use a full vertical 9:16 frame, but each places its own interface elements over the video, so the visible area around a filter can differ. Always preview in the format you'll publish to before committing.

Do filtered videos get less reach on Instagram?

No. Instagram's algorithm doesn't penalize filters. What matters is engagement, watch time, and content quality. A heavily filtered video that confuses viewers may underperform — but that's a creative issue, not an algorithmic one.

Can I use Instagram filters on a video already in my camera roll?

Yes. Open the Instagram camera, tap the gallery icon, choose the video, then swipe through effects. The filter applies on top of your existing footage. The same flow works when uploading directly to a Reel or Story.

What happened to face filters on Instagram?

Some third-party AR effects were removed during Meta's 2025 platform changes when Spark AR's external creator program scaled back. Brand-owned and Meta-built effects remain. Custom filters can still be built and published through Spark AR Studio.

Can I use the same Instagram filter on TikTok or Facebook?

The exact filter, no — each platform has its own effects library. But if you record with a filter applied on Instagram and save the video, you can reupload that filtered clip to TikTok, Facebook, or any other platform. For consistency across platforms, apply edits in an editor like CapCut or VN before uploading anywhere.

How long does Spark AR review take for a custom filter?

Typically 5–10 business days. Complex effects or those using brand assets may take longer. Submit well ahead of campaign launch dates and assume at least one round of revisions.

Can I schedule a Reel with a custom AR filter applied?

Yes — once the AR filter is published and you've recorded a Reel using it, the saved video is just a normal Reel and can be scheduled like any other. See our guide on how to schedule Instagram Reels for the full publishing flow.

Quick Checklist Before You Publish a Filtered Video

Run through this before hitting Schedule or Share:

- ✅ Filter matches the content type (talking-head, product, lifestyle, etc.)

- ✅ Tested on a real phone — not just desktop preview

- ✅ Skin tones look natural in the lighting you shot in

- ✅ Product colors stay accurate (especially for beauty, food, fashion)

- ✅ Filter doesn't fight the audio, motion, or caption

- ✅ Saved to your effects tray for reuse on similar content

- ✅ Documented in your filter standard or brand guide

- ✅ Approved by client (if applicable) — including the visual treatment, not just caption and thumbnail

- ✅ Cross-platform export decided (filtered master vs raw master for TikTok/LinkedIn cross-posting)

- ✅ Aspect ratio is right: 9:16 for Reels and Stories, 4:5 to 1.91:1 for feed (see our Instagram image size guide)
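The aspect ratio item is the one part of this checklist that is easy to verify automatically before a video reaches the scheduler. Here is a minimal sketch using the ratios listed above. The `aspect_ok` function, the placement labels, and the tolerance value are assumptions for illustration, and the width and height would come from your own export settings or a media inspection tool.

```python
def aspect_ok(width: int, height: int, placement: str, tol: float = 0.01) -> bool:
    """Check a frame size against the ratios in the checklist.

    placement is "reel", "story", or "feed" (hypothetical labels).
    tol absorbs small rounding differences in exported dimensions.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    ratio = width / height
    if placement in ("reel", "story"):
        return abs(ratio - 9 / 16) <= tol
    if placement == "feed":
        # Feed accepts anything from 4:5 (portrait) to 1.91:1 (landscape).
        return 4 / 5 - tol <= ratio <= 1.91 + tol
    raise ValueError(f"unknown placement: {placement}")


print(aspect_ok(1080, 1920, "reel"))  # True  (9:16)
print(aspect_ok(1080, 1350, "feed"))  # True  (4:5)
print(aspect_ok(1920, 1080, "reel"))  # False (16:9 landscape)
```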

Key Takeaways

- Apply quickly: Open the camera → swipe right → tap a filter → record or upload → tap the smiley to save it for next time.

- Filter ≠ Effect: All filters are effects, but the Effects Gallery also includes AR overlays, animations, and interactive triggers — pick the right tool for the job.

- Consistency wins over trends: A small set of approved filters per content type beats chasing every viral aesthetic.

- Custom AR is a campaign asset, not a styling tool: Build it for participation, not polish — and ship a small first version before expanding.

- Test on phones, not desktops: Lighting, framing, load time, and heat behave differently in the hand than in Spark AR's preview.

- Measure at two levels: Native filters → improvement vs comparable posts. AR effects → opens, usage, shares, business outcome.

- Don't lock filters into source footage: Keep a clean master if you cross-post to TikTok, LinkedIn, or X — the same treatment rarely works everywhere.

- Document the standard: A one-page filter guide (approved looks, exceptions, escalation) prevents drift across editors and campaigns.

Related Reading

- How to Schedule Instagram Reels

- How to Schedule Instagram Stories

- Instagram Image Size Guide

- Best Time to Post on Instagram

- How to See Scheduled Posts on Instagram

- Instagram Scheduled Posts Not Working? 10 Quick Fixes

- Instagram Carousel Guide

- Best Instagram Post Scheduler Tools

- How to Schedule Anything on Instagram

- Automating Instagram Posts Safely

- Instagram Content Strategy Framework

- Instagram Business Account Analytics

- Difference Between Reels and Stories

- Trending Audio on Instagram

- Best AI Tools for Social Media Marketing

- Free Instagram Safe Zone Checker


About the Author

Hasan Cagli

Founder of PostPlanify, a content and social media scheduling platform. He focuses on building systems that help creators, businesses, and teams plan, publish, and manage content more efficiently across platforms.